A Guide to Robots.txt for SEO

Have you ever wondered how search engines manage to find and organise all the web pages on the internet?

Whether you're an expert in SEO or just beginning, this guide will help you understand Robots.txt, an important tool for managing your website's visibility online. We'll explain it in simple terms, showing you how to use it to your advantage.

Let's begin exploring Robots.txt and learn how it can improve your website's presence in search results.

What Is Robots.txt?

Robots.txt is a plain text file that websites use to guide web crawlers, the automated tools that browse and index web content for search engines. The file sits in the root directory of a website and tells crawlers which parts of the site they may or may not crawl. This gives you a say in which parts of the website search engines spend their time on. Note that it governs crawling rather than indexing: a blocked URL can still show up in search results if other sites link to it.

The concept of Robots.txt came about in 1994 as part of the Robots Exclusion Protocol. Its creation was a response to the growing need for website owners to manage how their content was accessed and catalogued by web crawlers during a period of rapid internet growth.

For instance, on a business website that offers a variety of products and services, robots.txt can be configured to ensure that search engines focus on indexing the main product pages and useful information, rather than internal or private sections of the site. This helps improve the website’s visibility in search results, making sure that the most relevant and important parts of the site are easily found by potential customers.

Key Components of Robots.txt

The key components of a Robots.txt file are user-agents and directives, which together help control how web crawlers interact with your website. User-agents are the names of these web crawlers. Each one, like Googlebot or Bingbot, behaves differently. By identifying these user-agents in your Robots.txt file, you can tailor how each crawler interacts with your site.

Directives are the instructions you give to these crawlers. For example, the "Disallow" directive tells crawlers not to access certain parts of your site, which generally keeps those areas out of search engine results. The "Allow" directive does the opposite, granting crawlers permission to visit specific areas, and is most useful for carving an exception out of a broader Disallow rule. There's also a "Crawl-delay" directive, which asks a crawler to wait a set number of seconds between requests, helping to manage the load on your server. Support for it varies: Bing honours it, while Googlebot ignores it.
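As a rough illustration of how a crawler might read these directives, the sketch below feeds a small rule set to Python's standard `urllib.robotparser` module. The paths, the `ExampleBot` name and the `example.com` domain are assumptions made up for the example. This particular parser applies the first rule that matches, so the narrower Allow line is listed before the broader Disallow line.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration; none of these paths come from a real site.
ROBOTS_TXT = """\
User-agent: *
Allow: /private/press-kit.html
Disallow: /private/
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Disallow: anything under /private/ is off limits...
blocked = parser.can_fetch("ExampleBot", "https://www.example.com/private/report.html")
# ...except the one page the Allow line carves out.
allowed = parser.can_fetch("ExampleBot", "https://www.example.com/private/press-kit.html")
# Paths with no matching rule are crawlable by default.
public = parser.can_fetch("ExampleBot", "https://www.example.com/products/")
# Crawl-delay is reported in seconds (None if the directive is absent).
delay = parser.crawl_delay("ExampleBot")

print(blocked, allowed, public, delay)
```

Real search engines may resolve conflicts between Allow and Disallow differently from this parser, so treat the result as a sanity check rather than a guarantee.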

Practical Usage of Robots.txt

Robots.txt is a powerful tool for guiding how search engines interact with your website. Here's how it can be practically used:

1. Prioritising Important Content: In the e-commerce industry, where a variety of products are sold online, Robots.txt is an important tool for focusing search engines on the most significant parts of a website. It guides these search engines to concentrate on key areas like the latest product listings or detailed service descriptions.

By directing search engines away from less important parts, such as old promotions or archived pages, e-commerce businesses ensure that the most relevant and current sections of their site are indexed. This strategic use of Robots.txt helps potential customers find the important parts of the website more easily in search results, improving the site's visibility and aligning with the business's SEO goals.

2. Avoiding Duplicate Content: In the publishing industry, where articles and news content are frequently updated, avoiding duplicate content is important for SEO. Robots.txt plays a key role in this by allowing publishers to block search engines from accessing and indexing pages with similar or repeated content.

For instance, if a publisher releases several articles on the same event with overlapping information, Robots.txt can be used to keep search engines from crawling the duplicate pages. This helps the site's unique, high-quality content stand out in search engine results and maintains the integrity and relevance of the publisher's online presence.

3. Hiding Incomplete Pages: In the technology industry, where new products and software are constantly being developed, it's important to only show complete and polished content to the public. Robots.txt helps with this by hiding pages that are still in development or not ready to be seen from search engines.

For example, if a tech company is working on a new app or software feature that isn't finished yet, they can use Robots.txt to keep these pages out of search engine results. This makes sure that when potential customers search online, they only find and see the fully developed and ready-to-use products, maintaining a professional image for the company.

4. Protecting Sensitive Areas: In the healthcare industry, where confidentiality is key, it's important to keep certain parts of a website private, like patient login areas or administrative sections. Robots.txt is used to ask search engines not to crawl these sensitive areas.

By setting up Robots.txt, healthcare websites can discourage these private sections from appearing in online search results. It is not a security measure on its own, though: the file is publicly readable and crawlers follow it voluntarily, so private areas must also be protected with proper authentication. Used alongside those controls, it supports the privacy of patients and healthcare staff in an industry where confidentiality and trust are paramount.

User-agent Specifics

On the internet, different web crawlers, like Googlebot, Bingbot, Baidu Spider, and Yandex's crawler, each have their own specific roles in SEO. Googlebot, for example, is Google's main crawler, searching the web to refresh Google's search results. It even has special versions for images and news. By understanding and catering to Googlebot's needs, you can improve how your content appears in Google's search results.

Bingbot, which works for Microsoft's Bing search engine, prefers websites with good multimedia content and those that are mobile-friendly. If you're optimising for Bing, keeping Bingbot's preferences in mind is crucial.

For those targeting the Chinese market, the Baidu Spider, the main crawler for China's Baidu search engine, is key. Optimising your website for Baidu Spider can significantly increase your visibility in China.

In Russia, Yandex is the main search engine, and its own web crawler has unique requirements. Understanding these is important for businesses looking to reach Russian-speaking audiences.

While it's important to optimise your website for these specific crawlers, it's equally important to ensure you're not accidentally blocking others. A well-balanced Robots.txt file is essential to maintain a broad and effective online presence across different search engines.
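One way crawlers get blocked or unblocked by accident is that a crawler with its own group generally follows only that group and ignores the general `*` group. The sketch below, again using Python's `urllib.robotparser`, shows the effect. The rules, paths and agent strings are illustrative assumptions, not a recommended configuration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: one group for Googlebot, one fallback group for everyone else.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /drafts/

User-agent: *
Disallow: /drafts/
Disallow: /internal-search/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://www.example.com/internal-search/?q=shoes"
agents = ("Googlebot", "Bingbot", "Baiduspider", "YandexBot")

# Googlebot only reads its own group, which says nothing about /internal-search/,
# so it is allowed in; the other crawlers fall back to the '*' group and are blocked.
results = {agent: parser.can_fetch(agent, url) for agent in agents}

for agent, can_crawl in results.items():
    print(f"{agent}: {'allowed' if can_crawl else 'blocked'}")
```

If a rule should apply to every crawler, it needs to appear in each named group as well as in the `*` group.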

Directives in Depth

In an industry like retail, where a business might offer a wide range of products from clothing to electronics, the "Disallow" directive in Robots.txt can be particularly useful.

For instance, a retail website could use "Disallow" to stop search engines from crawling seasonal sale sections once the season has ended, or product pages that are out of stock or discontinued. This helps ensure that customers and search engines focus on currently relevant and available products.

Additionally, retail sites often have internal pages meant for staff use, such as employee login areas or inventory management systems. Using "Disallow" keeps crawlers away from these pages and maintains a streamlined, customer-focused presence in search results. Because anyone can read Robots.txt, these pages still need a login of their own to be secure.

Wildcards (*) can offer more nuanced control in such a retail setting. A retailer could block URLs containing specific query parameters that lead to less relevant or redundant product listings. This precision in directing search engine crawlers helps to highlight the most important product categories or new arrivals, optimising the site's search visibility and user experience for a diverse customer base.
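To make the wildcard behaviour concrete, here is a minimal sketch of a pattern matcher. It assumes the widely used conventions that `*` matches any run of characters and a trailing `$` anchors the pattern to the end of the URL. The function name `rule_matches` and the sample paths are hypothetical, and a real crawler's matching may differ in edge cases.

```python
import re


def rule_matches(pattern: str, path: str) -> bool:
    """Return True if a robots.txt path pattern matches a URL path.

    Assumes '*' matches any run of characters and a trailing '$'
    anchors the pattern to the end of the URL.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape each literal piece, then join the pieces with '.*' for every '*'.
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    if anchored:
        regex += "$"
    # re.match anchors at the start, mirroring robots.txt prefix matching.
    return re.match(regex, path) is not None


# A retailer blocking sorted duplicates of its category pages:
print(rule_matches("/*?sort=", "/shoes?sort=price"))        # sorted listing
print(rule_matches("/*?sort=", "/shoes"))                   # plain listing
print(rule_matches("/*.pdf$", "/docs/size-guide.pdf"))      # ends in .pdf
print(rule_matches("/*.pdf$", "/docs/size-guide.pdf?v=2"))  # does not end in .pdf
```

Without the `$`, a pattern behaves as a prefix match, so `/private/` also covers everything beneath that directory.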

Crafting Your Robots.txt File

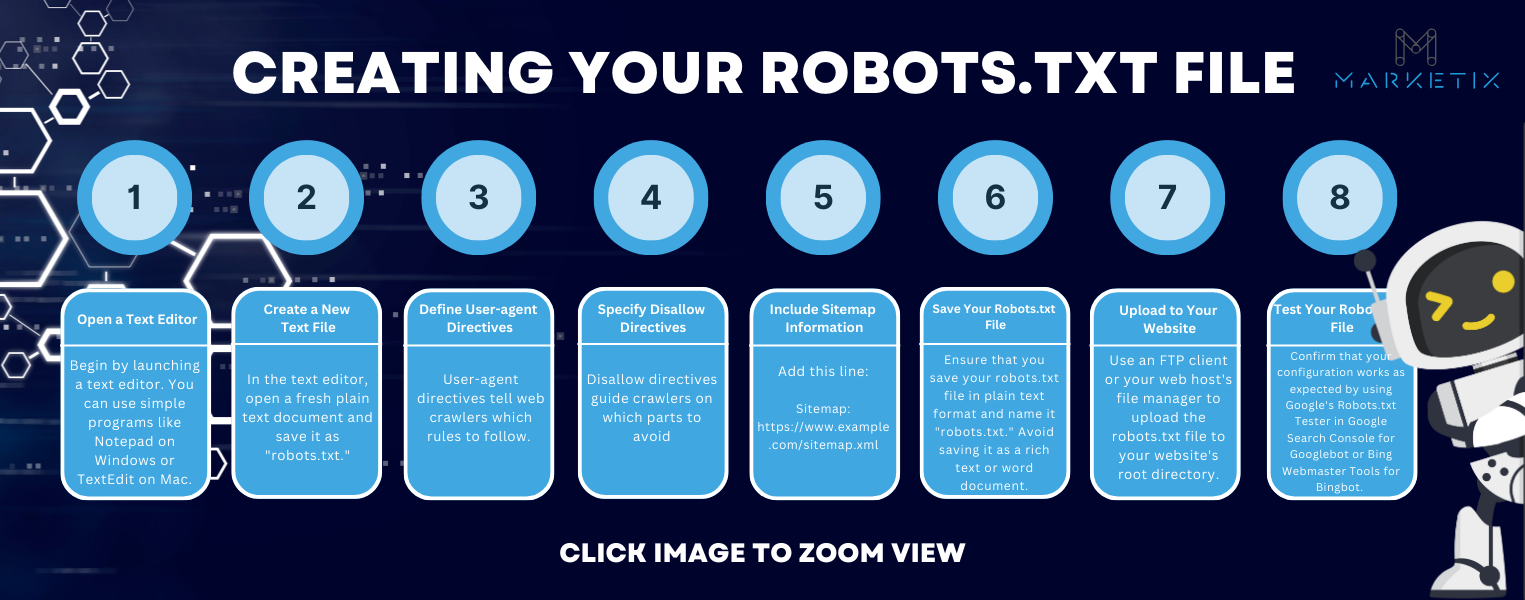

Creating your Robots.txt file doesn't have to be complicated. Follow these steps:

Step 1: Open a Text Editor

Begin by launching a text editor. You can use simple programs like Notepad on Windows or TextEdit on Mac.

Step 2: Create a New Text File

In the text editor, open a fresh plain text document and save it as "robots.txt" (all lowercase).

Step 3: Define User-agent Directives

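User-agent lines tell web crawlers which group of rules applies to them, and each rule sits on its own line directly beneath. To allow all web crawlers access to everything, type this (an empty Disallow value blocks nothing):

User-agent: *
Disallow:

To address a particular crawler, such as Googlebot:

User-agent: Googlebot
Disallow: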

Step 4: Specify Disallow Directives

Disallow directives tell crawlers which parts to avoid. Paths are case-sensitive and are matched from the start of the URL path. For example:

• To block a directory, like "/private/", use:

User-agent: *
Disallow: /private/

• To block a single page, like "/secret-page.html", use:

User-agent: *
Disallow: /secret-page.html

• To block all PDF files, use:

User-agent: *
Disallow: /*.pdf

• To block URLs that end with a specific query string, such as "?parameter=1234", use the following (the trailing $ anchors the match to the end of the URL):

User-agent: *
Disallow: /*?parameter=1234$

Step 5: Include Sitemap Information (Optional)

To specify your XML sitemap's location, add this line:

Sitemap: https://www.example.com/sitemap.xml
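As a quick check that the Sitemap line is picked up, the sketch below parses a small file with Python's `urllib.robotparser` and reads the sitemap URLs back. The rules are illustrative, and `site_maps()` requires Python 3.8 or later.

```python
from urllib.robotparser import RobotFileParser

# Illustrative file: one rule group plus a Sitemap line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

Sitemap: https://www.example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns a list of sitemap URLs, or None if the file has no Sitemap line.
sitemaps = parser.site_maps()
print(sitemaps)
```

The Sitemap line is independent of the User-agent groups, so it can sit anywhere in the file and applies to every crawler.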

Step 6: Save Your Robots.txt File

Ensure that you save the file in plain text format and name it "robots.txt". Avoid saving it as a rich text or Word document.

Step 7: Upload to Your Website

Use an FTP client or your web host's file manager to upload the robots.txt file to your website's root directory.

Step 8: Test Your Robots.txt File

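Confirm that your configuration works as expected. For Googlebot, the robots.txt report in Google Search Console (which replaced the older Robots.txt Tester) shows how Google fetched and parsed your file, and the URL Inspection tool shows whether a specific URL is blocked. For Bingbot, use the robots.txt tester in Bing Webmaster Tools. Test specific URLs to see if they are allowed or disallowed.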
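Alongside the search engines' own tools, a small local check can catch obvious mistakes before you upload. The sketch below is one possible approach using Python's `urllib.robotparser`. The helper name `check_rules`, the `ExampleBot` agent and the URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser


def check_rules(robots_txt, expectations, agent="ExampleBot"):
    """Return the URLs whose allowed/blocked status differs from what was expected."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        url
        for url, expected in expectations.items()
        if parser.can_fetch(agent, url) != expected
    ]


# Rules taken from the Step 4 examples above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /secret-page.html
"""

# True means the URL should be crawlable, False means it should be blocked.
EXPECTED = {
    "https://www.example.com/": True,
    "https://www.example.com/private/notes.html": False,
    "https://www.example.com/secret-page.html": False,
}

failures = check_rules(ROBOTS_TXT, EXPECTED)
print("All rules behave as expected" if not failures else f"Unexpected results: {failures}")
```

To check a live site instead, `RobotFileParser` also offers `set_url()` and `read()`, which download the file before parsing. Note that this standard-library parser does plain prefix matching and does not interpret `*` or `$` inside paths, so wildcard rules are best verified in the search engines' own tools.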

Regular Maintenance and Updates

Regular maintenance and updates of your Robots.txt file are essential in the dynamic world of SEO. Here's a deeper look at why and how to keep your file up to date:

• Adapting to SEO Changes: SEO constantly evolves, so your Robots.txt needs to keep up. For instance, if search engines change how they crawl websites, you may need to update your file to make sure the right pages are indexed.

• Growing with Your Website: As your website expands, say with a new blog or product section, your Robots.txt should be updated to reflect these changes. This makes sure that new parts of your site are properly included or excluded from search engine indexing.

• Avoiding Errors: An old Robots.txt file can cause miscommunication with web crawlers, leading them to miss important content. Regular reviews and updates help avoid this. For example, if you’ve reorganised your website but haven’t updated Robots.txt, crawlers might not find your newest content.

• Regular Checks and Updates: Consistently updating your Robots.txt ensures it matches current SEO practices. It’s also good to test your file after each update to ensure it’s working as intended, like confirming new rules are correctly blocking or allowing access.

• Staying Relevant: As SEO trends change, so should your Robots.txt file. If new types of content become significant for SEO, like videos, updating your file can help ensure these elements are considered by search engines.
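When you do update the file, it can help to compare the old and new versions against a list of URLs you care about, so that an edit never silently blocks or unblocks something. The following is a minimal sketch of that idea. The function name `access_changes`, the agent name and all URLs are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser


def access_changes(old_txt, new_txt, urls, agent="ExampleBot"):
    """Map each URL whose crawl permission changed to an (old, new) pair."""
    old, new = RobotFileParser(), RobotFileParser()
    old.parse(old_txt.splitlines())
    new.parse(new_txt.splitlines())
    changes = {}
    for url in urls:
        before = old.can_fetch(agent, url)
        after = new.can_fetch(agent, url)
        if before != after:
            changes[url] = (before, after)
    return changes


# Hypothetical update: the blog goes public and a drafts area is hidden.
OLD = "User-agent: *\nDisallow: /blog/\n"
NEW = "User-agent: *\nDisallow: /drafts/\n"
URLS = [
    "https://www.example.com/blog/launch",
    "https://www.example.com/drafts/idea",
    "https://www.example.com/products/",
]

changes = access_changes(OLD, NEW, URLS)
for url, (before, after) in changes.items():
    print(f"{url}: allowed {before} -> {after}")
```

Keeping the URL list in version control next to the Robots.txt file makes this check easy to rerun after every change.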

Why You Need Robots.txt

Robots.txt is important for two main reasons in improving your website's SEO.

Firstly, it boosts your website’s visibility. It guides search engine crawlers to focus on the most important parts of your site, like your main products or services. This makes sure that these key areas are prominently indexed and appear in search results, giving your essential content the attention it needs.

Secondly, Robots.txt helps manage your website's crawl budget. Think of this as the effort and resources search engines use to explore and index your site. By using Robots.txt to indicate which parts of your site are less important, like outdated promotions or temporary pages, you ensure search engines prioritise indexing your most valuable content. This smart management of resources leads to more efficient and effective SEO results for your site.

Conclusion

This guide has provided you with a detailed understanding of Robots.txt and its role in SEO.

We’ve explored its background, key elements, how it’s used in practice, and how to tailor it for different search engines.

Now, you have the tools to effectively use Robots.txt to improve your website’s visibility in search engines. Remember, SEO is always changing, so continue applying what you’ve learned here and stay updated with new developments.

We invite you to share this helpful guide with your friends and colleagues.

If you have any questions or feedback, don't hesitate to reach out to our SEO agency. You can also explore more SEO-related content on our website to enhance your expertise further.
